Revolutionary AI Maps our Galaxy’s 100 billion Stars Faster than Ever

AI-Powered Leap in Milky Way Modeling

A research team led by Keiya Hirashima at the RIKEN Center for Interdisciplinary Theoretical and Mathematical Sciences (iTHEMS) in Japan—working with collaborators from The University of Tokyo and the Universitat de Barcelona in Spain—has developed the first Milky Way simulation capable of following more than 100 billion individual stars across 10,000 years. This breakthrough was made possible by integrating artificial intelligence (AI) with advanced numerical techniques, enabling a model with 100 times more stars than previous state-of-the-art simulations and completing the calculations over 100 times faster.

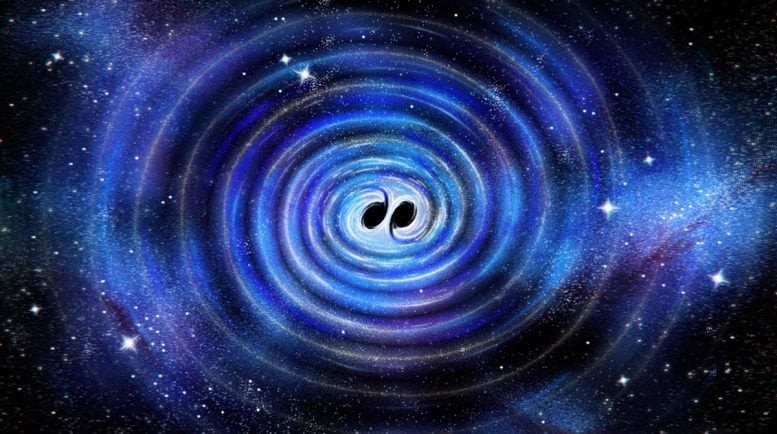

Figure 1. AI Breakthrough Charts 100 billion Milky Way Stars at Record Speed.

For decades, astrophysicists have sought a Milky Way model precise enough to track each star’s individual trajectory. Such a simulation would offer a powerful tool for testing theories of galaxy formation, structural evolution, and stellar life cycles, and would provide a basis for comparison with observational data. Achieving this is notoriously difficult, however, because it requires accounting for gravity, fluid dynamics, supernova effects, and element formation, each of which operates on vastly different scales of time and space. Figure 1 depicts the AI-assisted charting of the Milky Way’s 100 billion stars.

Why Simulating Every Star Is So Hard

Until now, researchers have been unable to model a galaxy as large as the Milky Way while retaining the fidelity needed to track individual stars. Previous state-of-the-art simulations topped out at roughly one billion solar masses—far short of the Milky Way’s more than 100 billion stars.

Because of this limitation, each “particle” in earlier models represented a cluster of around 100 stars rather than a single one. This averaging hides important small-scale processes, so only broad galactic structures could be simulated accurately. The main bottleneck is the timestep between successive calculations: rapid, small-scale changes, such as those in a supernova explosion, can be captured only if the simulation advances in extremely fine increments.

The Computational Wall: Why Traditional Supercomputers Fall Short

Shrinking the timestep, however, dramatically increases the computational burden. Even the most powerful physics-based simulations available today would require 315 hours to model just 1 million years of Milky Way evolution at individual-star resolution. At that rate, a 1-billion-year simulation would take about 36 years of real time. Simply adding more supercomputer cores isn’t a viable solution, as energy demands become enormous and performance gains stop scaling efficiently.
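The 36-year estimate follows from simple scaling. The short Python sketch below reproduces it from the 315-hour figure quoted above, assuming that wall-clock cost grows linearly with simulated time and that a year is 365.25 days; the function and constant names are illustrative only.

```python
# Figure quoted in the article for a conventional physics-based simulation.
HOURS_PER_MYR_CONVENTIONAL = 315.0  # wall-clock hours per 1 million simulated years
TARGET_SPAN_MYR = 1_000             # 1 billion years = 1,000 million years

HOURS_PER_YEAR = 24 * 365.25        # assumption: a year of 365.25 days


def wall_clock_years(hours_per_myr: float, span_myr: float) -> float:
    """Wall-clock time in years, assuming cost scales linearly with simulated time."""
    return hours_per_myr * span_myr / HOURS_PER_YEAR


conventional_years = wall_clock_years(HOURS_PER_MYR_CONVENTIONAL, TARGET_SPAN_MYR)
print(f"{conventional_years:.1f} years")  # prints "35.9 years"
```

The result, 315,000 hours or just under 36 years, is consistent with the roughly 36-year figure quoted above.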

To overcome these constraints, Hirashima and colleagues created a hybrid framework that merges a deep learning surrogate model with conventional physics simulations. The surrogate model was trained on high-resolution supernova simulations, learning how gas expands over the 100,000 years following an explosion—without relying on full-scale computation each time. This AI-assisted shortcut allows the system to simulate both large-scale galactic motions and the fine-scale physics of events like supernovae.
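The control flow of such a hybrid scheme can be pictured with a toy example. The Python sketch below is not the team's code: the `GasCell` type, the cooling formula, the factor of 10, and the choice of a global step equal to the 100,000-year horizon are all invented for illustration. It shows only the dispatch idea, in which cells that have just hosted a supernova are handed to a surrogate while all other cells go through the ordinary physics update.

```python
import math
from dataclasses import dataclass

SURROGATE_HORIZON_YR = 100_000  # horizon quoted in the article for post-supernova gas


@dataclass
class GasCell:
    position_pc: float       # toy 1D position in parsecs
    thermal_energy: float    # arbitrary units
    supernova: bool = False  # True if a supernova has just gone off in this cell


def toy_surrogate(cell: GasCell) -> GasCell:
    """Stand-in for a trained network: jumps straight to a post-supernova state.

    The real surrogate is a deep learning model; this formula is a placeholder.
    """
    return GasCell(cell.position_pc, cell.thermal_energy * 10.0, supernova=False)


def physics_step(cell: GasCell, dt_yr: float) -> GasCell:
    """Stand-in for the conventional solver: simple exponential cooling."""
    cooling_time_yr = 1.0e6  # hypothetical value
    factor = math.exp(-dt_yr / cooling_time_yr)
    return GasCell(cell.position_pc, cell.thermal_energy * factor, cell.supernova)


def hybrid_step(cells: list[GasCell], dt_yr: float) -> list[GasCell]:
    """Advance all cells by one global step, routing supernova cells to the surrogate."""
    return [toy_surrogate(c) if c.supernova else physics_step(c, dt_yr) for c in cells]


# Usage: one quiet cell and one cell that has just hosted a supernova.
cells = [GasCell(0.0, 1.0), GasCell(10.0, 1.0, supernova=True)]
cells = hybrid_step(cells, dt_yr=SURROGATE_HORIZON_YR)
```

The point of the pattern is that the global step never has to shrink to resolve the explosion itself, since the surrogate supplies the outcome of that fine-scale evolution directly.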

The team validated their approach by comparing simulation results to large-scale tests run on RIKEN’s Fugaku supercomputer and The University of Tokyo’s Miyabi system.

Deep Learning Breakthrough for Supernova-Driven Dynamics

This method not only enables individual-star accuracy in galaxies exceeding 100 billion stars but also slashes computation time. Simulating 1 million years took just 2.78 hours—meaning that a full 1-billion-year simulation could be finished in roughly 115 days instead of 36 years.
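These figures can be checked with a few lines of Python. The sketch below uses only the two per-million-year timings quoted in the article and assumes linear scaling with simulated time; the variable names are illustrative.

```python
# Wall-clock hours per 1 million simulated years, as quoted in the article.
HOURS_PER_MYR_CONVENTIONAL = 315.0
HOURS_PER_MYR_HYBRID = 2.78
SPAN_MYR = 1_000  # 1 billion years = 1,000 million years

# Assumption: cost scales linearly with the simulated time span.
hybrid_days = HOURS_PER_MYR_HYBRID * SPAN_MYR / 24
speedup = HOURS_PER_MYR_CONVENTIONAL / HOURS_PER_MYR_HYBRID

print(f"hybrid run: {hybrid_days:.1f} days")  # prints "hybrid run: 115.8 days"
print(f"speedup: {speedup:.0f}x")             # prints "speedup: 113x"
```

The ratio of about 113 matches the claim that the calculations run more than 100 times faster.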

Beyond astronomy, this hybrid approach could revolutionize other multi-scale simulations—such as climate, ocean, and atmospheric modeling—that require tight coupling between small-scale and large-scale processes.

A New Era for Galaxy Evolution and Climate-Scale Modeling

“Incorporating AI into high-performance computing represents a fundamental change in how we approach multi-scale, multi-physics challenges across computational science,” Hirashima says. “This work shows that AI-accelerated simulations can go beyond pattern recognition to become true engines of scientific discovery—helping us trace how the very elements that give rise to life emerged in our galaxy.”

Source: SciTechDaily

Cite this article:

Priyadharshini S (2025), Revolutionary AI Maps our Galaxy’s 100 billion Stars Faster than Ever, AnaTechMaz, pp.609