Network and Cloud Impact of Agentic AI

Enterprises have traditionally treated AI as a specialized component within specific business processes, far from the ever-present assistant that consumer users interact with. In enterprise settings, this component-style approach is referred to as “agentic” AI. Such agents may engage directly with people, software workflows, or even other agents, but they almost always rely on enterprise data. This distinction from the typical online chat-style AI is crucial, because it has significant implications for enterprise network traffic, infrastructure demands, and overall budgets.

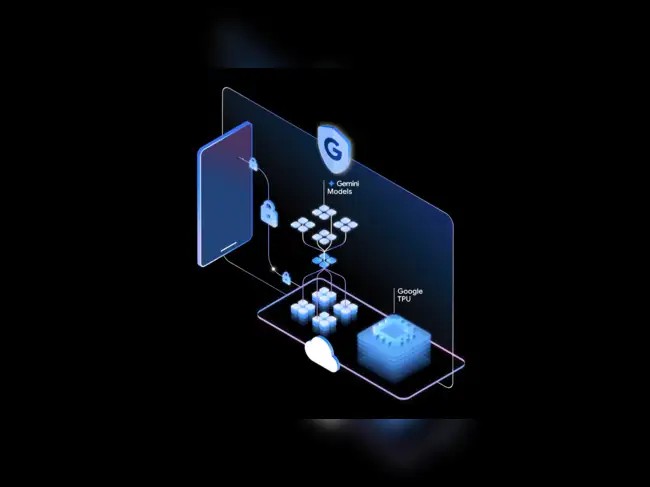

Figure 1. How Agentic AI Is Reshaping Enterprise Networks and Cloud Systems.

Agentic AI systems are typically built on pre-trained “foundation models,” so their demand for enterprise data arises continuously at run time rather than only during an initial training phase. Although these agents resemble software components more than generative AI services, they differ fundamentally from conventional software in how they access enterprise data. As a result, evaluating traffic load, cost implications, governance, and security will require special consideration beyond traditional software-centric methods. Figure 1 shows how agentic AI is reshaping enterprise networks and cloud systems.

Unlike conventional software, AI agents don’t include explicit read or write operations. Instead, they typically rely on the Model Context Protocol (MCP) to connect with company data indirectly, through an MCP server and a set of “tools” that bridge the model and data sources. The model requests a tool, the MCP server that exposes the tool runs it, and the tool retrieves the data. Any modification to the server or toolkit can alter data access patterns (what data is used, where it resides, or how much is accessed), introducing a new kind of “chain” behavior unique to AI agents.
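The indirection described above can be illustrated with a minimal Python sketch. It does not use the real MCP SDK: `ToolServer`, `list_orders`, `list_orders_v2`, and the `ORDERS` data are hypothetical stand-ins, assumed only to show that the agent names a tool rather than reading data itself, and that swapping a tool changes how much data moves.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical in-memory stand-in for an enterprise data source.
ORDERS = [
    {"id": 1, "total": 40},
    {"id": 2, "total": 75},
    {"id": 3, "total": 120},
]


@dataclass
class ToolServer:
    """Illustrative stand-in for an MCP server (not the real MCP SDK)."""

    tools: dict[str, Callable[..., list]] = field(default_factory=dict)
    access_log: list[tuple[str, int]] = field(default_factory=list)

    def register(self, name: str, fn: Callable[..., list]) -> None:
        self.tools[name] = fn

    def call(self, name: str, **kwargs) -> list:
        rows = self.tools[name](**kwargs)
        # Record how many rows each tool call pulled from the data source.
        self.access_log.append((name, len(rows)))
        return rows


def list_orders(min_total: int = 0) -> list:
    """Tool v1: filters at the data source and returns only matching rows."""
    return [row for row in ORDERS if row["total"] >= min_total]


def list_orders_v2(min_total: int = 0) -> list:
    """Tool v2: returns every row and leaves filtering to the caller."""
    return list(ORDERS)


server = ToolServer()
server.register("list_orders", list_orders)

# The "agent" only names a tool; it never reads the data source directly.
first = server.call("list_orders", min_total=100)

# Swapping the tool implementation changes how much data moves,
# even though the agent's request is identical.
server.register("list_orders", list_orders_v2)
second = server.call("list_orders", min_total=100)

print(server.access_log)
```

The access log in this sketch records a different row count for the same request before and after the tool is replaced, which is the kind of shift in access pattern that the agent and its user would not see directly.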

Enterprises describe MCP tools as deceptively powerful. They act as proxies for broad capabilities—such as database queries, event triggers, updates, and even operational actions. When an employee uses an AI agent connected to an MCP server and its tools, they indirectly gain the same level of access and authority those tools possess. Often, users aren’t even aware of this, since the model’s interaction with the tools and data occurs within an opaque “black box.”

This chain of dependency between agents, servers, tools, and data makes it difficult to measure and manage the resulting data traffic. In practice, enterprises rarely rely on a single tool or MCP server, further complicating oversight. A sound policy framework should define access hierarchically—first by which MCP servers a model can connect to, then by which tools those servers can use, and finally by which datasets those tools can reach. This layered control helps prevent accidental exposure of sensitive data or unintended permission escalation.
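One way to picture the layered policy is as a nested allow-list that is checked one level at a time. The sketch below is illustrative only: the names `finance-agent`, `erp-server`, `read_invoices`, and the dataset names are hypothetical, and a real deployment would enforce this in its access-control system rather than in a dictionary.

```python
# Hypothetical policy: model -> MCP server -> tool -> permitted datasets.
POLICY = {
    "finance-agent": {
        "erp-server": {
            "read_invoices": {"invoices"},
            "read_vendors": {"vendors"},
        },
    },
}


def is_allowed(policy: dict, model: str, server: str, tool: str, dataset: str) -> bool:
    """Check each layer in order; anything not explicitly listed is denied."""
    servers = policy.get(model, {})      # which servers may this model reach?
    tools = servers.get(server, {})      # which tools on that server?
    datasets = tools.get(tool, set())    # which datasets for that tool?
    return dataset in datasets


print(is_allowed(POLICY, "finance-agent", "erp-server", "read_invoices", "invoices"))
print(is_allowed(POLICY, "finance-agent", "erp-server", "read_invoices", "payroll"))
print(is_allowed(POLICY, "finance-agent", "hr-server", "read_invoices", "invoices"))
```

Because every layer defaults to an empty set of permissions, adding a new server or tool grants nothing until the policy names it explicitly.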

The chain analogy is key: realistic AI agent applications depend on access to core enterprise databases, meaning the agent, MCP server, tools, and data are all interlinked—and the network is the connective tissue binding them.

Enterprises have been surprised by how much network traffic this setup generates. Many initially envisioned their AI workloads running on isolated “AI clusters” loosely connected to the main data center. In reality, agentic AI has driven a shift toward smaller AI servers deployed within core data centers, resulting in all model-related traffic—input, inference, and data exchange—flowing through the main enterprise network.

This has validated early vendor warnings that AI networking demands could be profound. A single agent query can scan millions of database entries, consuming as much bandwidth as an entire week of normal business activity. Such loads can degrade network performance and Quality of Experience (QoE) for other applications. When this traffic extends into or out of cloud environments, costs can spike dramatically—so much so that about one-third of enterprises report AI-driven traffic surges significant enough to cause network congestion or trigger financial audits.
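A rough back-of-the-envelope estimate shows how quickly such a scan adds up. The figures below (row count, bytes per row, link speed) are purely hypothetical inputs chosen for illustration and are not taken from the article; the function `estimate_transfer` is likewise an assumed helper.

```python
def estimate_transfer(rows: int, bytes_per_row: int, link_gbps: float) -> tuple[float, float]:
    """Return (gigabytes moved, seconds needed on an otherwise idle link)."""
    total_bytes = rows * bytes_per_row
    gigabytes = total_bytes / 1e9
    # Convert bytes to bits, then divide by link capacity in bits per second.
    seconds = (total_bytes * 8) / (link_gbps * 1e9)
    return gigabytes, seconds


# Hypothetical inputs: 5 million rows of 2,000 bytes each over a 1 Gbps link.
gigabytes, seconds = estimate_transfer(
    rows=5_000_000, bytes_per_row=2_000, link_gbps=1.0
)
print(f"{gigabytes:.1f} GB, {seconds:.0f} s of full link utilization")
```

Under these assumed numbers, one query would occupy the whole link for more than a minute, and the same calculation can be repeated with an organization's own row sizes and link capacities.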

Finally, security and governance pose major challenges. MCP implementations don’t always enforce strong authentication, and since tools can modify data, a compromised or poorly designed tool could corrupt, fabricate, or delete information. To mitigate this, enterprises recommend restricting AI agents from using tools that can alter data or trigger real-world actions unless there’s strict oversight of both tool design and agent behavior—to ensure the system remains reliable and secure.
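The recommendation to block data-altering tools unless they have been reviewed could be expressed as a simple gate in front of every tool call. This is a minimal sketch under stated assumptions: `ToolSpec`, `ApprovalRequired`, `authorize`, and the tool names are hypothetical and are not part of MCP itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolSpec:
    """Hypothetical tool description; mutates_data marks write-capable tools."""

    name: str
    mutates_data: bool


class ApprovalRequired(Exception):
    """Raised when a data-altering tool has not been explicitly reviewed."""


def authorize(tool: ToolSpec, approved_mutating_tools: frozenset[str]) -> None:
    """Allow read-only tools; allow mutating tools only if explicitly approved."""
    if tool.mutates_data and tool.name not in approved_mutating_tools:
        raise ApprovalRequired(
            f"tool {tool.name!r} can alter data and has not been reviewed"
        )


READ = ToolSpec("read_invoices", mutates_data=False)
WRITE = ToolSpec("update_invoice", mutates_data=True)
APPROVED: frozenset[str] = frozenset()  # nothing reviewed yet

authorize(READ, APPROVED)  # passes silently
try:
    authorize(WRITE, APPROVED)
except ApprovalRequired as exc:
    print(f"blocked: {exc}")
```

Keeping the approved set empty by default means a newly added write-capable tool stays blocked until someone reviews it and adds its name.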

Source: NETWORK WORLD

Cite this article:

Priyadharshini S (2025), Network and Cloud Impact of Agentic AI, AnaTechMaz, pp.169