Nvidia New Technology to Speed Up AI

The H100 chip, which packs 80 billion transistors, will be produced on Taiwan Semiconductor Manufacturing Company's cutting-edge four-nanometre process.

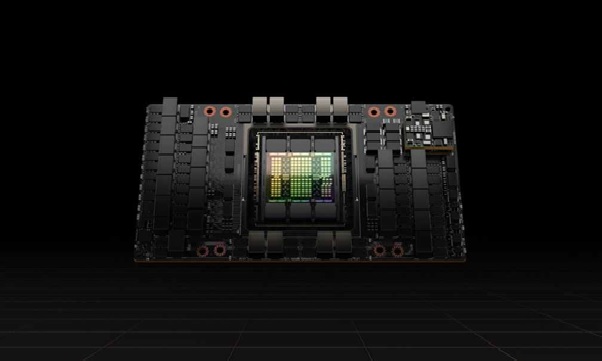

Figure 1: Nvidia unveils latest chips to speed up AI computing.

Nvidia Corp on Tuesday announced several new chips and technologies that it said will boost the computing speed of increasingly complicated artificial intelligence algorithms, stepping up competition against rival chipmakers vying for lucrative data centre business.

Nvidia's graphics chips (GPUs), which initially helped drive and improve video quality in the gaming market, have become the dominant chips that companies use for AI workloads.

The latest GPU, called the H100, can help reduce computing times from weeks to days for some work involving training AI models, the company said. [1]

"Data centres are becoming AI factories — processing and refining mountains of data to produce intelligence," said Nvidia Chief Executive Officer Jensen Huang in a statement, calling the H100 chip the "engine" of AI infrastructure.

Companies have been using AI and machine learning for everything from recommending the next video to watch to discovering new drugs, and the technology is increasingly becoming an important tool for business.

The H100 chip, which has 80 billion transistors, will be produced on Taiwan Semiconductor Manufacturing Company's cutting-edge four-nanometre process and will be available in the third quarter, Nvidia said.

The H100 will also be used to build Nvidia's new "Eos" supercomputer, which Nvidia said will be the world's fastest AI system when it begins operation later this year.

Facebook parent Meta announced in January that it would build the world's fastest AI supercomputer this year and that it would perform at nearly 5 exaflops. Nvidia on Tuesday said its supercomputer will run at over 18 exaflops.

Exaflop performance is the ability to perform 1 quintillion (1,000,000,000,000,000,000) calculations per second. [2]
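To put these figures on a common scale, the short Python sketch below converts the quoted exaflop numbers into calculations per second and compares them. The names used are illustrative only, and the inputs are the companies' own claims (nearly 5 exaflops for Meta, over 18 exaflops for Nvidia), rounded to 5 and 18, not measured results.

```python
# One exaflop is 10**18 (1 quintillion) calculations per second.
EXAFLOP = 10**18

# Company-claimed figures quoted in the article, rounded (not benchmarks).
claimed_exaflops = {
    "Meta supercomputer": 5,   # "nearly 5 exaflops"
    "Nvidia Eos": 18,          # "over 18 exaflops"
}


def ops_per_second(exaflops):
    """Convert a figure in exaflops to calculations per second."""
    return exaflops * EXAFLOP


for name, exaflops in claimed_exaflops.items():
    print(f"{name}: {ops_per_second(exaflops):,} calculations per second")

# Ratio of the two rounded claims.
ratio = claimed_exaflops["Nvidia Eos"] / claimed_exaflops["Meta supercomputer"]
print(f"The Eos claim is about {ratio:.1f} times the Meta claim")
```

On these rounded inputs, the Nvidia claim works out to roughly three and a half times the Meta claim.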

Nvidia says an H100 GPU is three times faster than its previous-generation A100 at FP16, FP32, and FP64 compute, and six times faster at 8-bit floating point math.

The company also announced a new data centre CPU, the Grace CPU Superchip, which consists of two CPUs connected directly via a new low-latency interconnect, NVLink-C2C.

The chip is designed to “serve giant-scale HPC and AI applications” alongside the new Hopper-based GPUs and can be used for CPU-only systems or GPU-accelerated servers. It has 144 Arm cores and 1TB/s of memory bandwidth.

During his keynote, Nvidia CEO Jensen Huang said that Eos, when running traditional supercomputer tasks, would deliver 275 petaflops of compute, 1.4 times faster than “the fastest science computer in the US” (Summit).

“We expect Eos to be the fastest AI computer in the world,” said Huang. “Eos will be the blueprint for the most advanced AI infrastructure for our OEMs and cloud partners.” [3]
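The two Eos figures use different yardsticks: over 18 exaflops for AI work and 275 petaflops for traditional supercomputer tasks. As a rough sketch, the Python snippet below puts both in petaflops and works out the Summit figure implied by the "1.4 times faster" comparison. The variable names are illustrative, the snippet assumes "1.4 times faster" means Eos delivers 1.4 times Summit's performance, and the implied Summit value is derived only from the numbers quoted here, not from a benchmark listing.

```python
PETAFLOPS_PER_EXAFLOP = 1_000  # 1 exaflop = 1,000 petaflops

eos_ai_exaflops = 18             # claimed AI performance ("over 18 exaflops")
eos_traditional_petaflops = 275  # claimed performance on traditional tasks
speedup_over_summit = 1.4        # assumption: Eos = 1.4 x Summit

# Summit performance implied by the keynote comparison.
implied_summit_petaflops = eos_traditional_petaflops / speedup_over_summit

# How much larger the claimed AI figure is than the traditional-task figure.
eos_ai_petaflops = eos_ai_exaflops * PETAFLOPS_PER_EXAFLOP
ai_to_traditional = eos_ai_petaflops / eos_traditional_petaflops

print(f"Implied Summit performance: about {implied_summit_petaflops:.0f} petaflops")
print(f"AI figure is about {ai_to_traditional:.0f} times the traditional figure")
```

Under these assumptions, the comparison implies a Summit figure of a little under 200 petaflops, and the AI number is several dozen times larger than the traditional-task number, which is why the two should not be compared directly.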

References:

[1] https://www.deccanherald.com/business/technology/nvidia-unveils-latest-chips-technology-to-speed-up-ai-computing-1093734.html

[2] https://finance.yahoo.com/news/nvidia-unveils-latest-chip-speed-154022782.html

[3] https://www.theverge.com/2022/3/22/22989182/nvidia-ai-hopper-architecture-h100-gpu-eos-supercomputer

Cite this article:

Sri Vasagi K (2022), Nvidia New Technology to Speed Up AI, p. 74