Real GPU Cost Savings in the Cloud – Perfect for Agile Teams

Cloud-based GPU computing has become noticeably cheaper over the past year, offering tangible cost savings to organizations agile enough to take advantage of the available compute capacity.

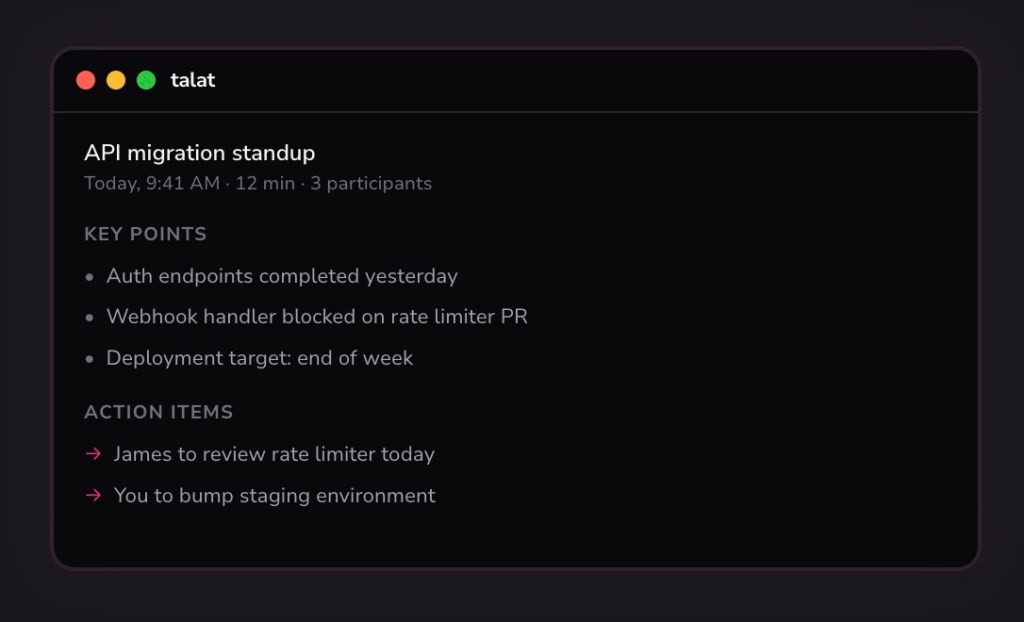

Figure 1. Cloud GPU Savings: Maximizing Efficiency for Agile Teams.

Cast AI, which develops an application performance automation platform, recently released a report on the changing economics of cloud GPU computing with Nvidia's A100 and H100 GPUs. The report examines real-world pricing and availability across the top three cloud providers: Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP). Figure 1 depicts cloud GPU savings as a way for agile teams to maximize efficiency.

Laurent Gil, president and co-founder of Cast AI, noted that while a few major players such as OpenAI, Meta, Google, and Anthropic still lead in model training, smaller startups are increasingly concentrating on inference workloads that deliver immediate business impact.

“What we’re seeing now is that the real business of AI lies in inference,” Gil said. “This represents a shift from hype to reality.”

Cast AI's analysis found that the price of a high-demand AWS H100 GPU Spot Instance (p5.48xlarge) dropped dramatically, by as much as 88% in one region: from $105.20 in January 2024 to just $12.16 by September 2025. In Europe, H100 pricing fell by up to 48%, with nearly double the efficiency during peak usage windows.
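As a quick sanity check, the 88% figure follows directly from the two quoted prices. The minimal sketch below computes the percentage decrease; the helper name `percent_drop` is purely illustrative and not part of any Cast AI tooling.

```python
def percent_drop(old_price: float, new_price: float) -> float:
    """Return the percentage decrease from old_price to new_price."""
    if old_price <= 0:
        raise ValueError("old_price must be positive")
    return (old_price - new_price) / old_price * 100


# Prices quoted in the report for an AWS p5.48xlarge (H100) Spot Instance
# in one region: January 2024 vs. September 2025.
drop = percent_drop(105.20, 12.16)
print(f"{drop:.1f}%")  # prints "88.4%"
```

The September 2025 price is therefore roughly one ninth of the January 2024 price in that region.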

The trend highlights a shifting GPU landscape: while cutting-edge chips like Nvidia’s GB200 Blackwell processors remain extremely scarce, older models such as the A100 and H100 are becoming more affordable and accessible. However, customer behavior doesn’t always align with practical needs. “Many are buying the newest GPUs out of FOMO—the fear of missing out,” Gil noted. “ChatGPT itself was built on older architecture, and nobody complained about its performance.”

Gil stressed that managing cloud GPU resources effectively now demands agility, both operational and geographic. Spot instance availability can fluctuate by the hour or even by the minute, and supply varies across data center regions. Enterprises that dynamically shift workloads between regions, often with the help of AI-driven automation, can cut costs by up to 80%.
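The core decision behind this kind of region shifting can be sketched in a few lines: from a snapshot of per-region spot prices and capacity, pick the cheapest region that actually has GPUs available. This is a simplified illustration under stated assumptions, not Cast AI's method. The `RegionQuote` type, the region names, and the prices are all hypothetical, and a real system would refresh the snapshot continuously from the provider's pricing data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RegionQuote:
    """Hypothetical snapshot of spot pricing and capacity in one region."""
    region: str
    spot_price: float  # illustrative price per instance-hour
    available: bool    # whether spot capacity can currently be obtained


def pick_region(quotes: list[RegionQuote]) -> Optional[RegionQuote]:
    """Return the cheapest region that currently has capacity, or None."""
    candidates = [q for q in quotes if q.available]
    if not candidates:
        return None
    return min(candidates, key=lambda q: q.spot_price)


# Made-up numbers for illustration only. region-b is cheapest on paper,
# but it has no capacity right now, so it is skipped.
snapshot = [
    RegionQuote("region-a", 40.0, True),
    RegionQuote("region-b", 12.0, False),
    RegionQuote("region-c", 18.0, True),
]
best = pick_region(snapshot)
print(best.region if best else "no capacity")  # prints "region-c"
```

Filtering on availability before comparing prices reflects Gil's point that a low price is useless when no capacity exists there.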

“If you can move workloads to where GPUs are cheap and available, you pay five times less than a company that can’t move,” he said. “Humans simply can’t react that fast; automation is essential.”
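Gil's "five times less" and the "up to 80%" figure describe the same ratio. Paying one fifth of the original price is an 80% saving. A tiny sketch of that conversion follows; the helper name is hypothetical.

```python
def savings_fraction(cost_ratio: float) -> float:
    """Fraction saved when paying 1/cost_ratio of the original price."""
    if cost_ratio <= 0:
        raise ValueError("cost_ratio must be positive")
    return 1 - 1 / cost_ratio


print(f"{savings_fraction(5):.0%}")  # prints "80%"
```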

While Cast AI conveniently sells a solution for this kind of automation, Gil's point holds more broadly: if spot pricing is lower in another region, moving workloads there is key to keeping cloud costs down.

Gil concluded by urging engineers and CTOs to prioritize flexibility and automation over being tied to fixed regions or infrastructure providers. “If you want to win this game, you have to let your systems self-adjust and locate capacity where it exists. That’s the key to making AI infrastructure sustainable.”

Source: Network World

Cite this article:

Priyadharshini S (2025), Real GPU Cost Savings in the Cloud – Perfect for Agile Teams, AnaTechMaz, pp. 182