AWS Supports Enterprise AI Growth While Upholding Data Sovereignty

AWS Introduces AI Factories to Help Enterprises Run AI on Premises

Enterprises handling sensitive data often face a choice: pay for costly on-premises AI infrastructure or navigate complex data sovereignty and compliance rules in the cloud. AWS is now offering a third option with AI Factories, a fully managed on-premises AI infrastructure solution.

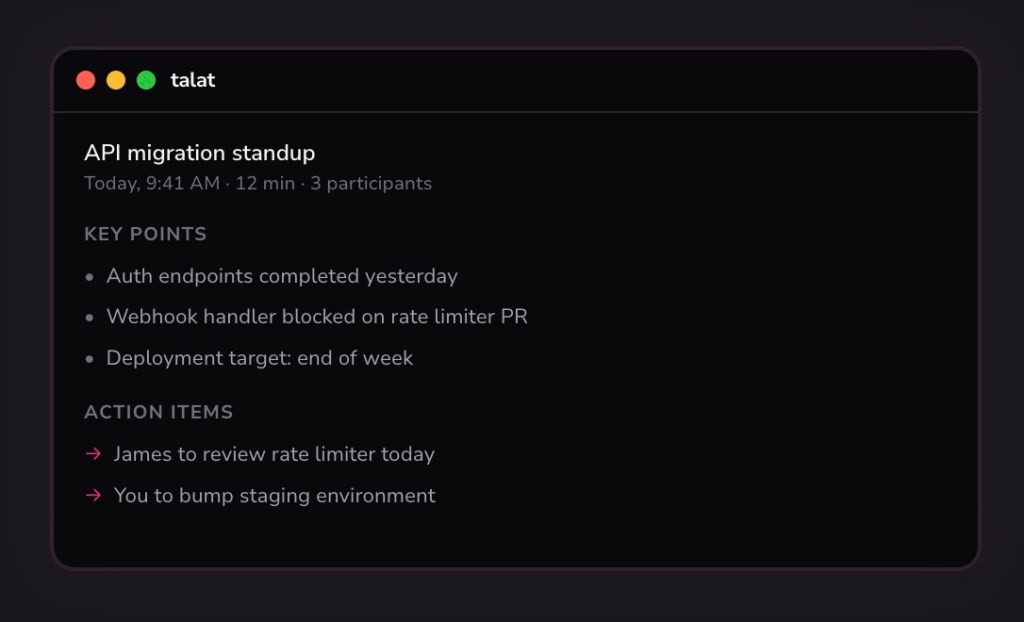

Figure 1. AWS Enables AI Expansion While Protecting Data Control.

Similar to AWS Outposts for traditional compute, AI Factories installs dedicated hardware and software directly in a customer’s data center, enabling AI and agentic applications while keeping data fully under the customer’s control. Figure 1 illustrates how AWS enables AI expansion while protecting data control.

“With this launch, we’re enabling customers to deploy dedicated AI infrastructure from AWS in their own data centers for exclusive use,” said AWS CEO Matt Garman at AWS re:Invent.

AWS AI Factories Bring Cloud-Powered AI On Premises

AWS AI Factories offers enterprises a fully managed, on-premises AI infrastructure with dedicated hardware and software, including Nvidia’s latest GPUs, AWS Trainium chips, high-performance networking, and services like SageMaker and Bedrock.

Garman described it as a “private AWS region” that lets organizations use their own data center space and power while getting cloud-like elasticity and retaining full control.

The solution is designed to help enterprises navigate AI adoption challenges complicated by data sovereignty and regulatory compliance, according to HyperFRAME Research analyst Stephen Sopko.

AWS AI Factories Targets Sovereign AI Market with On-Premises Cloud Capabilities

“The AWS AI Factory seeks to resolve the tension between cloud-native innovation velocity and sovereign control. Historically, these objectives lived in opposition,” said Sopko. “CIOs faced an unsustainable dilemma: choose between on-premises security or public cloud cost and speed benefits. This is arguably AWS’s most significant move in the sovereign AI landscape.”

Managed on-premises GPUs are not new: Oracle’s Cloud@Customer, Microsoft’s Azure Local, and Google Distributed Cloud already offer them. AWS AI Factories differentiates itself by combining dedicated hardware, including Nvidia GPUs and AWS Trainium chips, with AWS services such as SageMaker and Bedrock in a single fully managed offering.

The service will also compete with other on-premises AI platforms, such as Nvidia’s AI Factory, Dell’s AI Factory stack, and HPE’s Private Cloud for AI, all built around Nvidia GPUs and networking.

Sopko highlighted AWS’s advantage: “The secret sauce is the software, not the infrastructure.” AWS’s integration of hardware and cloud-native software gives it a maturity edge in operationalizing AI on premises.

Source: NETWORK WORLD

Cite this article:

Priyadharshini S (2025), AWS Supports Enterprise AI Growth While Upholding Data Sovereignty, AnaTechMaz, pp.181